OCP 4

- OpenShift Container Platform 4 (OCP 4)

- Downloads

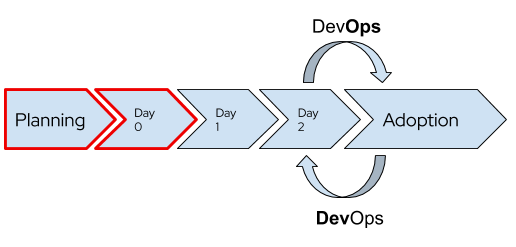

- OpenShift End-to-End. Day 0, Day 1 & Day 2

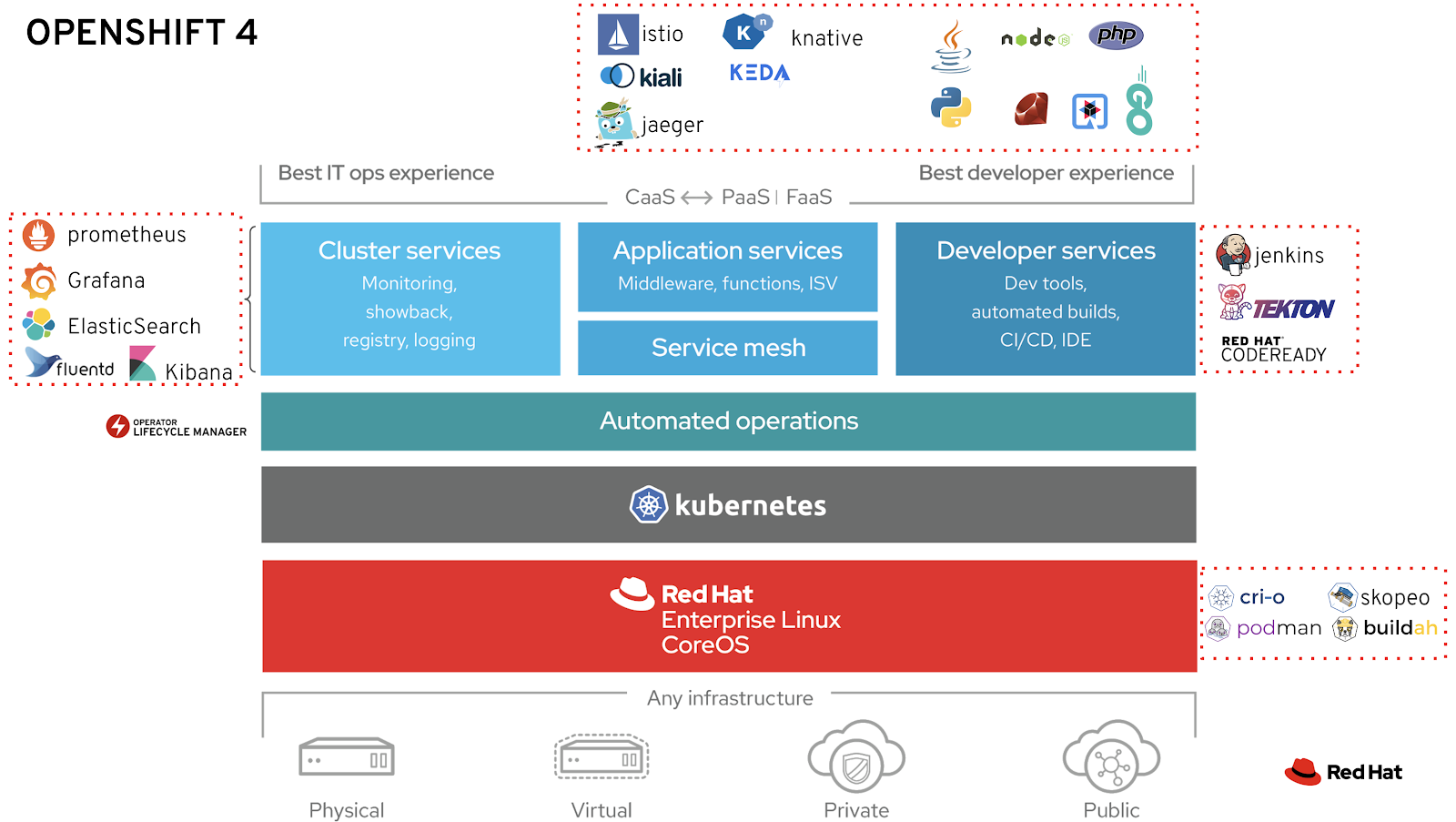

- OCP 4 Overview

- Three New Functionalities

- New Technical Components

- Installation and Cluster Autoscaler

- Cluster Autoscaler Operator

- Operators

- Monitoring and Observability

- Build Images. Next-Generation Container Image Building Tools

- OpenShift Registry and Quay Registry

- Local Development Environment

- GitOps Catalog

- OpenShift on Azure

- OpenShift Youtube

- OpenShift 4 Training

- OpenShift 4 Roadmap

- Kubevirt Virtual Machine Management on Kubernetes

- Networking and Network Policy in OCP4. SDN/CNI plug-ins

- Storage in OCP 4. OpenShift Container Storage (OCS)

- Red Hat Advanced Cluster Management for Kubernetes

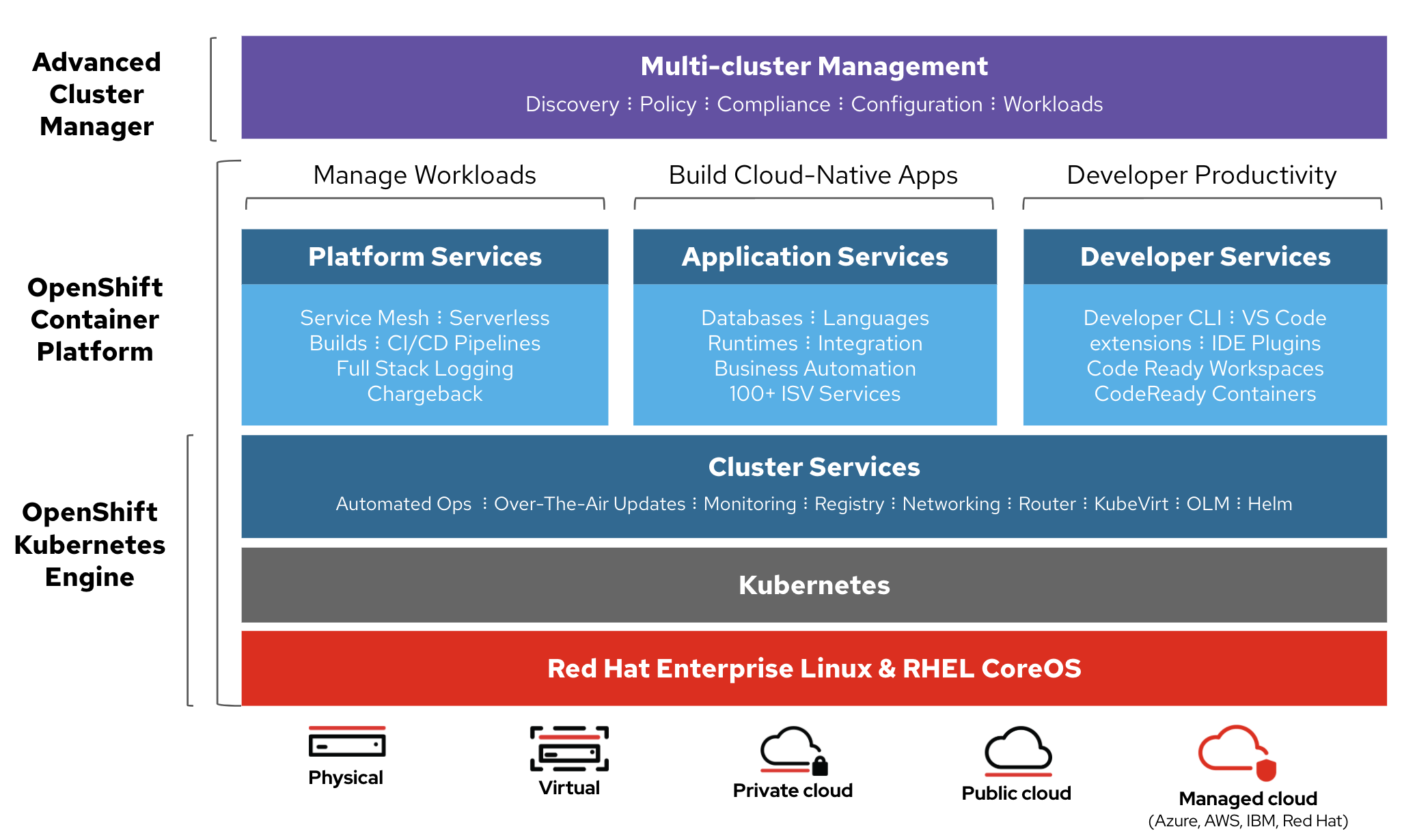

- OpenShift Kubernetes Engine (OKE)

- Red Hat CodeReady Containers. OpenShift 4 on your laptop

- OpenShift Hive: Cluster-as-a-Service. Easily provision new PaaS environments for developers

- OpenShift 4 Master API Protection in Public Cloud

- Backup and Migrate to OpenShift 4

- OKD4. OpenShift 4 without enterprise-level support

- OpenShift Serverless with Knative

- Helm Charts and OpenShift 4

- Red Hat Marketplace

- Kubestone. Benchmarking Operator for K8s and OpenShift

- OpenShift Cost Management

- Operators in OCP 4

- Quay Container Registry

- Application Migration Toolkit

- Developer Sandbox

- OpenShift Topology View

- OpenBuilt Platform for the Construction Industry

- OpenShift AI

- Scripts

- Slides

- Tweets

- Videos

OpenShift Container Platform 4 (OCP 4)

- blog.openshift.com: Introducing Red Hat OpenShift 4

- nextplatform.com: Red Hat Flexes CoreOS Muscle in OpenShift Kubernetes Platform

- OpenShift 4 documentation 🌟

- Dzone: What’s in OpenShift 4?

- blog.openshift.com: OpenShift 4 Install Experience

- operatorhub.io: OperatorHub.io is a new home for the Kubernetes community to share Operators. Find an existing Operator or list your own today.

- cloudowski.com: Honest review of OpenShift 4 🌟

- Enabling OpenShift 4 Clusters to Stop and Resume Cluster VMs

- blog.openshift.com: Simplifying OpenShift Case Information Gathering Workflow: Must-Gather Operator (In the context of Red Hat OpenShift 4.x and Kubernetes, it is considered a bad practice to ssh into a node and perform debugging actions) 🌟

- blog.openshift.com: Configure the OpenShift Image Registry backed by OpenShift Container Storage

- blog.openshift.com: OpenShift Scale: Running 500 Pods Per Node 🌟

- blog.openshift.com: Enterprise Kubernetes with OpenShift (Part one) 🌟

- devclass.com: OpenShift 4.4 goes all out on mixed workloads, puts observability at devs’ fingertips 🌟

- OpenShift 4.5: Node Improvements

- OpenShift 4.5: Red Hat takes Kubernetes to the cloud’s edge Red Hat agrees that edge computing is the future and it’s getting ready for this next stage in cloud computing with its latest OpenShift release.

- Fully Automated OpenShift Deployments With VMware vSphere

- OpenShift 4 “under-the-hood” 🌟

- thenewstack.io: Red Hat Launches an OpenShift-Based Marketplace to Aid Multicloud Portability 🌟

- openshift.com: OpenShift UPI using static IPs

- developers.redhat.com: OpenShift for Kubernetes developers: Getting started 🌟

- developers.redhat.com: Command-line cluster management with Red Hat OpenShift’s new web terminal (tech preview)

- Improved tooling and best practices to help you migrate to OpenShift 4

- openshift.com: OpenShift Architectures for the Edge With OpenShift 4.6

- dzone refcard: Getting Started With OpenShift 🌟

- openshift.com: Nested OpenShift using OpenShift Virtualization

- developers.redhat.com: Deploying Kubernetes Operators with Operator Lifecycle Manager bundles

- openshift.com: 8 Answers to 7 OpenShift Questions 🌟

- openshift.com: Red Hat OpenShift 4.7 Is Now Available

- Kubernetes 1.20

- Updated OpenShift Virtualization

- Virtualization Migrations

- Windows Containers on vSphere

- Simplified Bare Metal installs

- Horizontal Pod Autoscaler (CPU & Memory)

- New RHACM integrations

- and much, much more!!

- zdnet.com: Red Hat opens the door for both VMs and containers in its latest OpenShift release Red Hat’s OpenShift 4.7 can help you manage your entire cloud application stack.

- finance.yahoo.com: IBM’s Red Hat OpenShift Platform to be Leveraged by Siemens

- openshift.com: How to Offer Service Running on OpenShift on AWS to Other AWS VPCs, Privately 🌟

- developers.redhat.com: A guide to Red Hat OpenShift 4.5 installer-provisioned infrastructure on vSphere 🌟

- openshift.com: OpenShift Security Best Practices for Kubernetes Cluster Design 🌟

- fiercetelecom.com: Red Hat bundles security, management into OpenShift Plus IBM subsidiary Red Hat put its recently acquired StackRox assets to work, rolling out a new version of its OpenShift cloud platform that incorporates security, cluster management and registry capabilities in a single package.

- openshift.com: Descheduler GA in OpenShift 4.7 🌟 The Descheduler is an upstream Kubernetes subproject owned by SIG-Scheduling. Its purpose is to serve as a complement to the stock kube-scheduler, which assigns new pods to nodes based on the myriad criteria and algorithms it provides.

- opensourcerers.org: Automated Application Packaging and Distribution with OpenShift – Part 1/2 Learn how to automate application deployment and packaging with OpenShift and tools like source-to-image, templates and kustomize.

- openshift.com: How to Configure LDAP Sync With CronJobs in OpenShift 🌟

- schabell.org: How to setup the OpenShift Container Platform 4.7 on your local machine

- developers.redhat.com: Containerize .NET for Red Hat OpenShift: Use a Windows VM like a container

- openshift.com: A Brief Introduction to Red Hat Advanced Cluster Security for Kubernetes

- openshift.com: Customizing Virtual Machine Templates in OpenShift

- thenewstack.io: Red Hat OpenShift 4.8 Adds Serverless Functions, Pipelines-As-Code

- itprotoday.com: With OpenShift 4.8, Red Hat Seeks to ‘Expand Workload Possibilities’ With OpenShift 4.8, Red Hat seeks to simplify the developer experience and to expand use cases and workload possibilities.

- openshift.com: Strategies for Moving .NET Workloads to OpenShift Container Platform

- openshift.com: Ask an OpenShift Admin Office Hour - Authentication and Authorization

- openshift.com: Workload Support for Red Hat OpenShift Matures Across the Industry

- blog.byte.builders: Manage MongoDB in Openshift Using KubeDB

- developers.redhat.com: Troubleshooting application performance with Red Hat OpenShift metrics, Part 1: Requirements

- openshift.com: OCP Disaster Recovery Part 3: Recovering an OpenShift 4 IPI cluster With the Loss of Two Master Nodes 🌟

- openshift.com: OpenShift on ARM Developer Preview Now Available for AWS

- cloud.redhat.com: Changes coming for OpenShift.com and Cloud.Redhat.com We are moving! On July 29th, we will move OpenShift.com content into the RedHat.com domain. The console applications currently at Cloud.RedHat.com will move to a new URL at console.redhat.com. All current URLs and bookmarks will redirect to their new destinations. This change will make RedHat.com a one-stop destination for all our hybrid cloud and simplify your experience.

- developers.redhat.com: Troubleshooting application performance with Red Hat OpenShift metrics, Part 4: Gathering performance metrics

- cloud.redhat.com: Red Hat OpenShift 4.8 Is Now Generally Available

- zdnet.com: Qualys partners with Red Hat to improve Linux and Kubernetes security Security company Qualys is partnering with Red Hat to bring built-in Cloud Agent security to Red Hat Enterprise Linux CoreOS and Red Hat OpenShift.

- cloud.redhat.com: Getting Started in OpenShift 🌟

- redhat.com: Simplify application management in Kubernetes environments (ebook) Automation is at the core of cloud-native application development and deployment. Helm and Kubernetes Operators are two popular choices for automating the management of application and infrastructure software within your Kubernetes environment. These tools help to improve developer productivity, simplify application deployment and streamline updates and upgrades. Red Hat OpenShift supports both of these automation technologies, helping you to increase speed, efficiency, and scale and deliver a better user experience.

- cloud.redhat.com: OpenShift Sandboxed Containers 101 🌟

- thenewstack.io: IBM, Red Hat Bring Load-Aware Resource Management to Kubernetes

- kubernetes-sigs: Trimaran: Load-aware scheduling plugins 🌟 Trimaran is a collection of load-aware scheduler plugins

- developers.redhat.com: Composable software catalogs on Kubernetes: An easier way to update containerized applications

- cloud.redhat.com: Announcing Bring Your Own Host Support for Windows nodes to Red Hat OpenShift

- cloud.redhat.com: OpenShift Sandboxed Containers Operator From Zero to Hero, the Hard Way. The Operator Framework and Its Usage

- developers.redhat.com: Get started with OpenShift Service Registry

- cloud.redhat.com: Red Hat OpenShift 4.9 Is Now Generally Available

- redhat.com: Meet single node OpenShift: Our newest small OpenShift footprint for edge architectures

- cloud.redhat.com: How to Build a Disconnected OpenShift Cluster With Mirror Registries on RHEL CoreOS Using Podman and Systemd

- github.com/openshift/hypershift: HyperShift Hyperscale OpenShift - clusters with hosted control planes. HyperShift is a middleware for hosting OpenShift control planes at scale that solves for cost and time to provision, as well as portability cross cloud with strong separation of concerns between management and workloads. Clusters are fully compliant OpenShift Container Platform (OCP) clusters and are compatible with standard OCP and Kubernetes toolchains.

- michaelkotelnikov.medium.com: Managing Network Security Lifecycles in Multi Cluster OpenShift Environments with OpenShift Platform Plus In this article, you will learn how the tools in the OpenShift Platform Plus bundle help an organization maintain and secure network traffic flows in multi cluster OpenShift environments.

- medium.com/@shrishs: Application Backup and Restore using Openshift API for Data Protection(OADP)

- dev.to: Deep Dive into AWS OIDC identity provider when installing OpenShift using manual authentication mode with STS

- venturebeat.com: Red Hat gives an ARM up to OpenShift Kubernetes operations

- redhat.com: Planning your migration from Red Hat OpenShift 3 to 4 With OpenShift 3 nearing its end of life, now is the time to start planning your migration to OpenShift 4. These three steps will ease the journey.

- redhat.com: Red Hat OpenShift Platform Plus

- blog.knell.it: Commands Kubernetes should adopt from Red Hat OpenShift Working with Kubernetes would become easier and more efficient with support for these handy OpenShift commands.

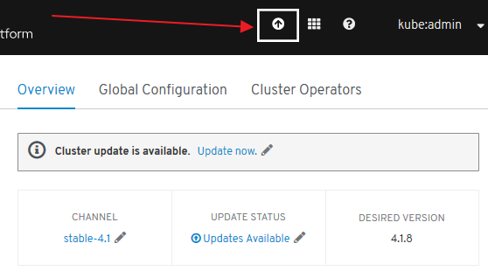

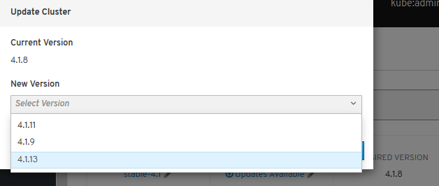

- mkdev.me: How to upgrade Openshift 4.x 🌟

OpenShift Guide

- mikeroyal/OpenShift-Guide: OpenShift Guide 🌟🌟 A guide for getting started with OpenShift including the Tools and Applications that will make you a better and more efficient engineer with OpenShift.

Single Node OpenShift

OpenShift sizing and subscription guide

OpenShift Platform Plus

- Red Hat OpenShift Platform Plus 🌟 Build, deploy, run, manage, and secure intelligent applications at scale across the hybrid cloud.

- thenewstack.io: Red Hat Offers a ‘Complete Kubernetes Stack’ with OpenShift Platform Plus 🌟

Best Practices

- developers.redhat.com - Best practices: Using health checks in the OpenShift 4.5 web console 🌟 3 types of health checks offered in OpenShift 4.5 to improve application reliability and uptime

- redhat-cop.github.io: Best practices for migrating from OpenShift Container Platform 3 to 4 🌟 This guide provides recommendations and best practices for migrating from OpenShift Container Platform 3.9+ to OpenShift 4.x with the Migration Toolkit for Containers (MTC).

- openshift.com: Applications Here, Applications There! - Part 3 - Application Migration Application Migration on Advanced Cluster Management

- openshift-yolo: an OpenShift CronJob that checks whether updates are available and, if so, upgrades the cluster to the latest version.

Setting up OCP4 on AWS

- AWS Account Set Up 🌟

- OpenShift 4 on AWS Quick Starts 🌟

- openshift.com: Control Regional Access to Your Service on OpenShift Running on AWS

- cloud.redhat.com: OpenShift Virtualization on Amazon Web Services Of the many selling points for OpenShift, one of the biggest is its ability to provide a common platform for workloads whether they are on premise or at one of the major cloud providers. With the availability of AWS bare metal instance types, Red Hat has announced that OpenShift 4.9 supports OpenShift Virtualization on AWS as a tech preview. Now virtual machines can be managed in much the same way in the cloud as on-premise.

ROSA Red Hat OpenShift Service on AWS

- redhat.com: Amazon and Red Hat Announce the General Availability of Red Hat OpenShift Service on AWS (ROSA)

- amazon.com: Red Hat OpenShift Service on AWS Now GA

- infoq.com: AWS Announces the General Availability of the Red Hat OpenShift Service on AWS

- datacenterknowledge.com: Red Hat Brings Its Managed OpenShift Kubernetes Service to AWS

- aws.amazon.com: Red Hat OpenShift Service on AWS: architecture and networking

- openshift.com: Using VPC Peering to Connect an OpenShift Service on an AWS (ROSA) Cluster to an Amazon RDS MySQL Database in a Different VPC

- blog.vizuri.com: Red Hat OpenShift Service on AWS (ROSA) Positions OpenShift for Mainstream Adoption

- cloud.redhat.com: Scale your application containers on Red Hat OpenShift Service on AWS (ROSA) clusters using Amazon EFS storage

- redhat.com: Red Hat OpenShift Service on AWS with hosted control planes now available Having the control plane hosted and managed in a ROSA service AWS account rather than the customer’s individual account provides more effective and efficient use of resources.

CI/CD in OpenShift

Downloads

OpenShift End-to-End. Day 0, Day 1 & Day 2

- OpenShift End-to-End: Day 0 - Plan and Deploy

- OpenShift End-to-End: Day 1 - Core Services

- OpenShift End-to-End: Day 2 - Cluster Customization 🌟

OCP 4 Overview

- Result of Red Hat’s acquisition of CoreOS (Red Hat is now part of IBM), which produced RHCOS (Red Hat Enterprise Linux CoreOS)

- Merge of two leading Kubernetes distributions, Tectonic and OpenShift:

- CoreOS Tectonic:

- Operator Framework

- quay.io container build and registry service

- A small, stable Linux distribution with Ignition-based bootstrapping and a transactional update engine.

- OpenShift:

- Wide enterprise adoption

- Security

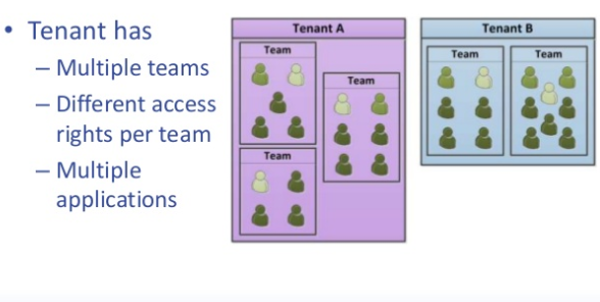

- Multi-tenancy features (self-service)


- OpenShift 4 is built on top of Kubernetes 1.13+

- Roadmap

- Release Notes

Three New Functionalities

- Self-Managing Platform

- Application Lifecycle Management (Operator Lifecycle Manager, OLM):

- OLM Operator:

- Responsible for deploying applications defined by a ClusterServiceVersion (CSV) manifest.

- Not concerned with the creation of the required resources; users can choose to manually create these resources using the CLI, or users can choose to create these resources using the Catalog Operator.

- Catalog Operator:

- Responsible for resolving and installing CSVs and the required resources they specify. It is also responsible for watching CatalogSources for updates to packages in channels and upgrading them (optionally automatically) to the latest available versions.

- A user that wishes to track a package in a channel creates a Subscription resource configuring the desired package, channel, and the CatalogSource from which to pull updates. When updates are found, an appropriate InstallPlan is written into the namespace on behalf of the user.


- Automated Infrastructure Management (Over-The-Air Updates)
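As a minimal sketch of the Subscription flow described above, the snippet below only writes a Subscription manifest to a file. The package name `example-operator`, the channel `stable` and the CatalogSource `community-operators` are hypothetical placeholders; look up real values with `oc get packagemanifests -n openshift-marketplace`. With `installPlanApproval: Manual`, OLM writes an InstallPlan that waits for approval instead of upgrading automatically.

```shell
# Write a Subscription manifest (package and channel names are hypothetical placeholders).
cat > subscription.yaml <<'EOF'
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: example-operator
  namespace: openshift-operators        # has a cluster-wide OperatorGroup by default
spec:
  name: example-operator                # package name as listed in the PackageManifest
  channel: stable                       # channel to track for updates
  source: community-operators           # CatalogSource that provides the package
  sourceNamespace: openshift-marketplace
  installPlanApproval: Manual           # OLM creates an InstallPlan that waits for approval
EOF

# With a logged-in oc session:
#   oc apply -f subscription.yaml
#   oc get installplan -n openshift-operators
#   oc patch installplan <name> -n openshift-operators --type merge -p '{"spec":{"approved":true}}'
```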

New Technical Components

- New Installer:

- Storage: Cloud integrated storage capability used by default via OCS Operator (Red Hat)

- There are a number of persistent storage options available to you through the OperatorHub / Storage vendors that don’t involve Red Hat, NFS or Gluster.

- Kubernetes-native persistent storage technologies available (non-Red Hat solutions):

- Operators End-To-End!: responsible for reconciling the system to the desired state

- Cluster configuration kept as API objects that ease its maintenance (“everything-as-code” approach):

- Every component is configured with Custom Resources (CR) that are processed by operators.

- No more painful upgrades and synchronization among multiple nodes and no more configuration drift.

- List of operators that configure cluster components (API objects):

- API server

- Nodes via Machine API

- Ingress

- Internal DNS

- Logging (EFK) and Monitoring (Prometheus)

- Sample applications

- Networking

- Internal Registry

- OAuth (and authentication in general)

- etc


- At the Node Level:

- RHEL CoreOS is the result of merging CoreOS Container Linux and Red Hat Atomic Host functionality. It is the only supported OS for control-plane nodes in OpenShift 4; compute nodes can also run RHEL.

- Node provisioning with Ignition, which came with CoreOS Container Linux

- Atomic host updates with rpm-ostree

- CRI-O as a container runtime

- SELinux enabled by default

- Machine API: Provisioning of nodes. Abstraction mechanism added (API objects to declaratively manage the cluster):

- Based on the Kubernetes Cluster API project. Cluster API is a Kubernetes sub-project focused on providing declarative APIs and tooling to simplify provisioning, upgrading, and operating multiple Kubernetes clusters.

- Provides a new set of machine resources:

- Machine

- Machine Deployment

- MachineSet:

- makes it easy to distribute your nodes across different Availability Zones

- manages multiple node pools (e.g. pool for testing, pool for machine learning with GPU attached, etc)

- Everything is “just another pod”

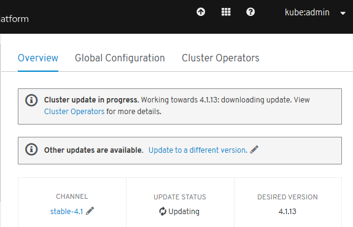

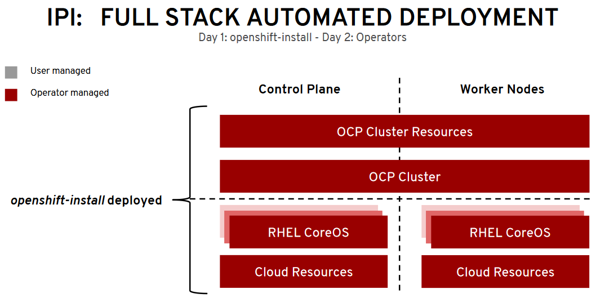

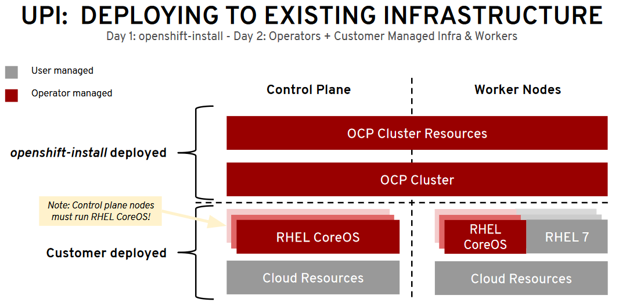

Installation and Cluster Autoscaler

- New installer: the openshift-install tool replaces the Ansible playbooks used to install OpenShift 3.

- About 40 minutes on AWS. The installer uses Terraform under the hood.

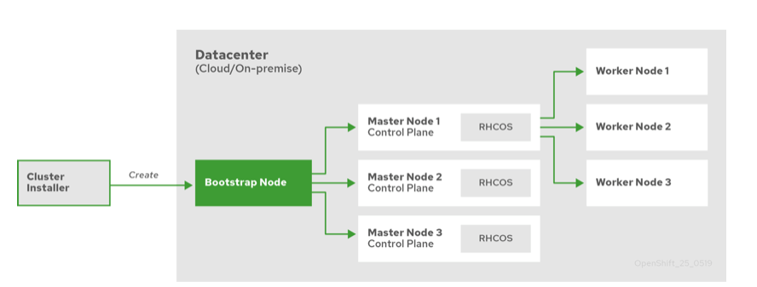

- 2 installation patterns:

- Installer Provisioned Infrastructure (IPI)

- User Provisioned Infrastructure (UPI)

- The whole process can be done in one command and requires minimal infrastructure knowledge (IPI):

```shell
openshift-install create cluster
```

IPI and UPI

- 2 installation patterns:

- Installer Provisioned Infrastructure (IPI): On supported platforms, the installer is capable of provisioning the underlying infrastructure for the cluster. The installer programmatically creates all portions of the networking, machines, and operating systems required to support the cluster. Think of it as best-practice reference architecture implemented in code. It is recommended that most users make use of this functionality to avoid having to provision their own infrastructure. The installer will create and destroy the infrastructure components it needs to be successful over the life of the cluster.

- User Provisioned Infrastructure (UPI): For other platforms or in scenarios where installer provisioned infrastructure would be incompatible, the installer can stop short of creating the infrastructure, and allow the platform administrator to provision their own using the cluster assets generated by the install tool. Once the infrastructure has been created, OpenShift 4 is installed, maintaining its ability to support automated operations and over-the-air platform updates.
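Both patterns start from an `install-config.yaml`, which `openshift-install create install-config --dir <dir>` can also generate interactively. The sketch below writes a minimal AWS one by hand; the base domain, cluster name, region, pull secret and SSH key are placeholders, not working values. The installer consumes `install-config.yaml`, so the snippet keeps a backup copy.

```shell
# Minimal install-config.yaml for an IPI install on AWS (all values are placeholders).
mkdir -p mycluster
cat > mycluster/install-config.yaml <<'EOF'
apiVersion: v1
baseDomain: example.com            # public DNS zone the cluster will use
metadata:
  name: mycluster                  # API endpoint becomes api.mycluster.example.com
controlPlane:
  name: master
  replicas: 3
compute:
- name: worker
  replicas: 3
platform:
  aws:
    region: us-east-1
pullSecret: '{"auths": {}}'        # replace with your pull secret from Red Hat
sshKey: 'ssh-ed25519 AAAA... user@host'
EOF

# The installer consumes install-config.yaml, so keep a copy.
cp mycluster/install-config.yaml install-config.yaml.bak

# IPI: provision the infrastructure and install in one step
#   openshift-install create cluster --dir mycluster --log-level=info
# UPI: generate the assets, then provision the infrastructure yourself
#   openshift-install create manifests --dir mycluster
#   openshift-install create ignition-configs --dir mycluster
```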

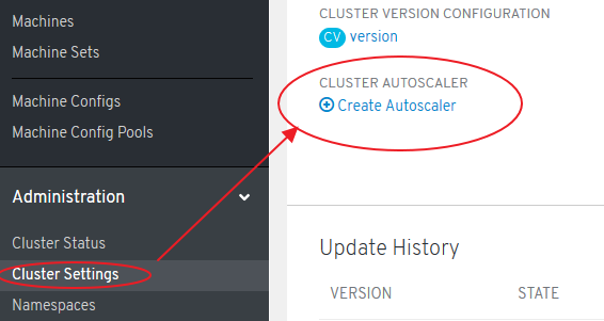

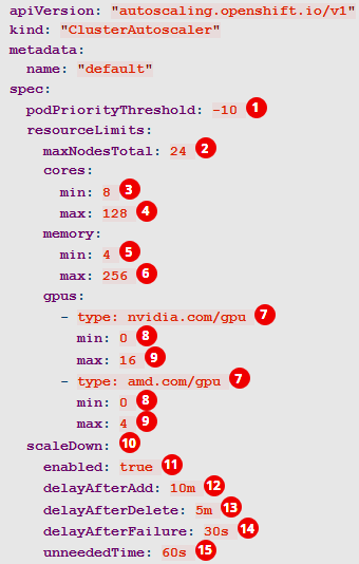

Cluster Autoscaler Operator

- Adjusts the size of an OpenShift Container Platform cluster to meet its current deployment needs. It uses declarative, Kubernetes-style arguments.

- Increases the size of the cluster when there are pods that failed to schedule on any of the current nodes due to insufficient resources or when another node is necessary to meet deployment needs. The ClusterAutoscaler does not increase the cluster resources beyond the limits that you specify.

- A big improvement over OpenShift 3, where adding RHEL nodes to a cluster was a manual, error-prone process.
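A ClusterAutoscaler on its own does not scale anything: at least one MachineAutoscaler must point at a MachineSet to mark it as scalable. The sketch below writes both objects; the limits and the MachineSet name `mycluster-abc12-worker-us-east-1a` are hypothetical placeholders (list real ones with `oc get machinesets -n openshift-machine-api`).

```shell
# ClusterAutoscaler (must be named "default") plus one MachineAutoscaler.
cat > autoscaling.yaml <<'EOF'
apiVersion: autoscaling.openshift.io/v1
kind: ClusterAutoscaler
metadata:
  name: default
spec:
  resourceLimits:
    maxNodesTotal: 12            # the autoscaler never grows the cluster past this
    cores:
      min: 8
      max: 96
    memory:                      # GiB
      min: 32
      max: 384
  scaleDown:
    enabled: true
    delayAfterAdd: 10m
    unneededTime: 5m
---
apiVersion: autoscaling.openshift.io/v1beta1
kind: MachineAutoscaler
metadata:
  name: worker-us-east-1a
  namespace: openshift-machine-api
spec:
  minReplicas: 1
  maxReplicas: 6
  scaleTargetRef:
    apiVersion: machine.openshift.io/v1beta1
    kind: MachineSet
    name: mycluster-abc12-worker-us-east-1a   # hypothetical MachineSet name
EOF

# With a logged-in oc session:
#   oc apply -f autoscaling.yaml
```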

Operators

Introduction

- Core of the platform

- The hierarchy of operators, with the Cluster Version Operator (ClusterVersion) at the top, is the single entry point for configuration changes and is responsible for reconciling the system to the desired state.

- For example, if you break a critical cluster resource directly, the system automatically recovers itself.

- The Operator Framework used for cluster maintenance is also available for applications. As a user, you get the Operator SDK, OLM (which manages the lifecycle of all Operators and their associated services running across the cluster) and an embedded OperatorHub.

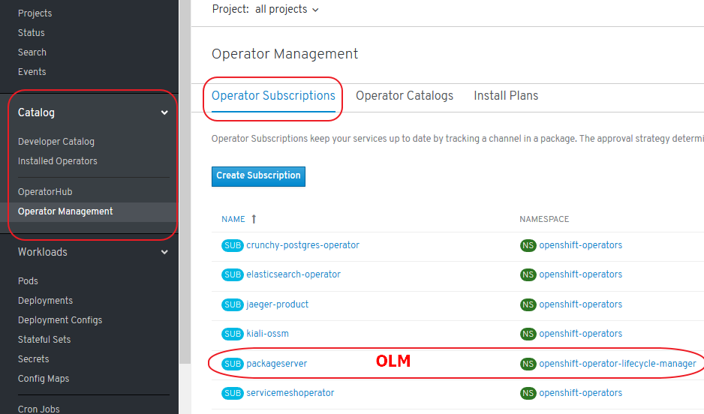

- OLM Architecture

- Adding Operators to a Cluster (They can be added via CatalogSource)

- The supported method of using Helm charts with OpenShift is via the Helm Operator

- twitter.com/operatorhubio

- View the list of Operators available to the cluster from the OperatorHub:

```shell
$ oc get packagemanifests -n openshift-marketplace
NAME                   AGE
amq-streams            14h
packageserver          15h
couchbase-enterprise   14h
mongodb-enterprise     14h
etcd                   14h
myoperator             14h
...
```

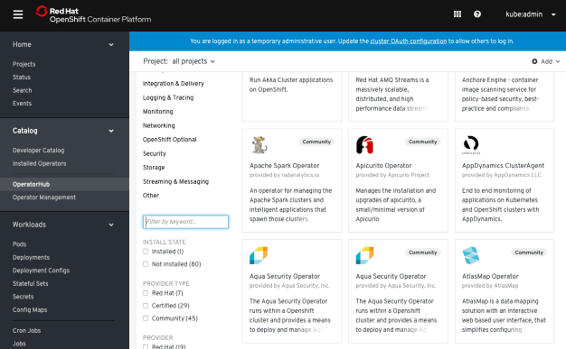

Catalog

- Developer Catalog

- Installed Operators

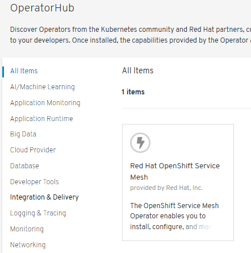

- OperatorHub (OLM)

- Operator Management:

- Operator Catalogs are groups of Operators that you can make available on the cluster. They are added via a CatalogSource (e.g. “catalogsource.yaml”). Subscribe to an Operator and grant a namespace access to use it.

- Operator Subscriptions keep your services up to date by tracking a channel in a package. The approval strategy determines either manual or automatic updates.
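A sketch of the two objects mentioned above, assuming a recent OpenShift 4 release where catalogs are served from a gRPC index image: a CatalogSource that adds a catalog, and an OperatorGroup that grants one namespace access to Operators installed there. The index image `quay.io/example/my-operator-index:latest` and the project `my-project` are hypothetical.

```shell
# Custom CatalogSource plus a single-namespace OperatorGroup (hypothetical names).
cat > catalog.yaml <<'EOF'
apiVersion: operators.coreos.com/v1alpha1
kind: CatalogSource
metadata:
  name: my-operator-catalog
  namespace: openshift-marketplace
spec:
  sourceType: grpc
  image: quay.io/example/my-operator-index:latest   # hypothetical index image
  displayName: My Operator Catalog
  publisher: Example Inc.
---
apiVersion: operators.coreos.com/v1
kind: OperatorGroup
metadata:
  name: my-project-operators
  namespace: my-project
spec:
  targetNamespaces:              # Operators installed here only watch these namespaces
  - my-project
EOF

# With a logged-in oc session:
#   oc apply -f catalog.yaml
#   oc get catalogsource -n openshift-marketplace
```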

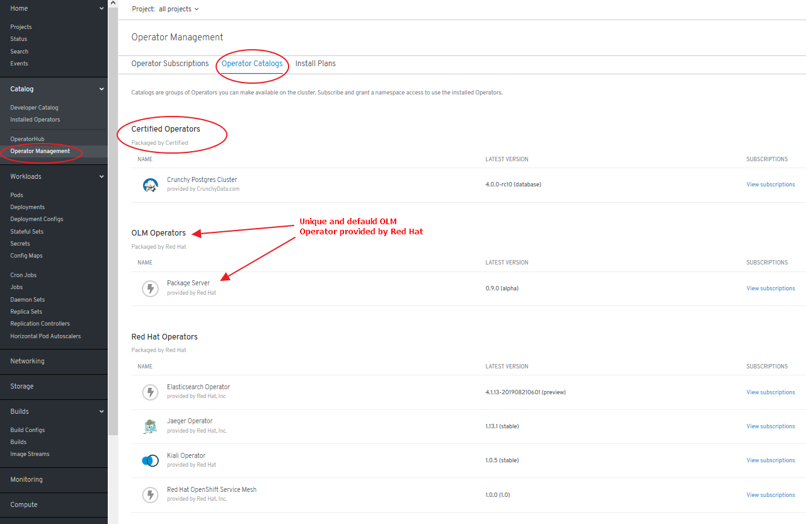

Certified Operators, OLM Operators and Red Hat Operators

- Certified Operators (packaged by certified partners):

- Not provided by Red Hat

- Supported by Red Hat

- Deployed via “Package Server” OLM Operator

- OLM Operators:

- Packaged by Red Hat

- “Package Server” OLM Operator includes a CatalogSource provided by Red Hat

- Red Hat Operators:

- Packaged by Red Hat

- Deployed via “Package Server” OLM Operator

- Community Edition Operators:

- Deployed by any means

- Not supported by Red Hat

Deploy and bind enterprise-grade microservices with Kubernetes Operators

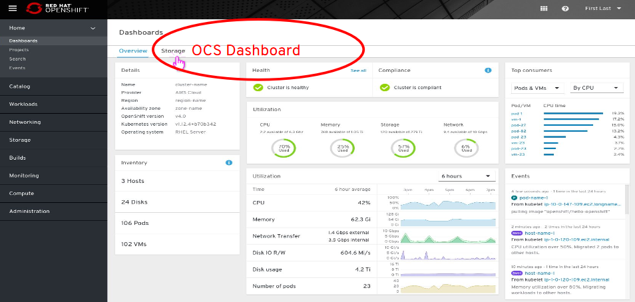

OpenShift Container Storage Operator (OCS)

OCS 3 (OpenShift 3)

- OpenShift Container Storage based on GlusterFS technology.

- Not OpenShift 4 compliant: migration tooling will be available to facilitate the move to OCS 4.x (OpenShift Gluster APP Migration Tool).

OCS 4 (OpenShift 4)

- OCS Operator based on Rook.io with Operator LifeCycle Manager (OLM).

- Tech Stack:

- Rook (don’t confuse this with the non-Red Hat “Rook Ceph” -> RH ref).

- Replaces Heketi (OpenShift 3)

- Uses Red Hat Ceph Storage and Noobaa.

- Red Hat Ceph Storage

- Noobaa:

- Red Hat Multi Cloud Gateway (AWS, Azure, GCP, etc)

- Asynchronous replication of data between the local Ceph cluster and a cloud provider

- Deduplication

- Compression

- Encryption


- Backups available in OpenShift 4.2+ (Snapshots + Restore of Volumes)

- OCS Dashboard in OCS Operator

Cluster Network Operator (CNO) & Routers

- Cluster Network Operator (CNO): The cluster network is now configured and managed by an Operator. The Operator upgrades and monitors the cluster network.

- Router plug-ins in OCP3:

- A “route” is the external entrypoint to a Kubernetes Service. This is one of the biggest differences between Kubernetes and OpenShift Enterprise (= OCP) and Origin.

- The OpenShift router targets the Service endpoints directly, i.e. the application pods.

- Shared/sticky sessions are enabled by default

- HAProxy template router (default router): HTTP(S) and TLS-enabled traffic via SNI.

- F5 BIG-IP Router plug-in integrates with an existing F5 BIG-IP system in your environment

- Since 9 May 2018, NGINX has also been available as a router.

- Routers in OCP4:

- Ingress Controller is the most common way to allow external access to an OpenShift Container Platform cluster

- Configuring Ingress Operator in OCP4

- Limited to HTTP, HTTPS using SNI, and TLS using SNI (sufficient for web applications and services)

- Has two replicas by default, which means it should be running on two worker nodes.

- Can be scaled up to have more replicas on more nodes.

- The Ingress Operator implements the ingresscontroller API and is the component responsible for enabling external access to OpenShift Container Platform cluster services.

- The operator makes this possible by deploying and managing one or more HAProxy-based Ingress Controllers to handle routing.

- Network Security Zones in Openshift (DMZ)

- openshift.com: Global Load Balancer for OpenShift clusters: an Operator-Based Approach

```shell
oc describe clusteroperators/ingress
oc logs --namespace=openshift-ingress-operator deployments/ingress-operator
```
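To make the Route and Ingress Controller notes above concrete, here is a sketch that writes a second, sharded IngressController (the Ingress Operator deploys HAProxy pods for it) and an edge-terminated Route labelled so that the shard serves it. All names and the domain `internal.apps.example.com` are hypothetical; nothing is applied to a cluster.

```shell
# Sharded IngressController + a Route it selects (hypothetical names and domain).
cat > internal-ingress.yaml <<'EOF'
apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  name: internal
  namespace: openshift-ingress-operator
spec:
  domain: internal.apps.example.com
  replicas: 2                    # HAProxy router pods deployed by the Ingress Operator
  routeSelector:
    matchLabels:
      type: internal             # only Routes with this label are served
---
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: frontend
  namespace: my-project
  labels:
    type: internal
spec:
  host: frontend.internal.apps.example.com
  to:
    kind: Service
    name: frontend
  port:
    targetPort: 8080             # target port on the pods behind the Service
  tls:
    termination: edge            # the router terminates TLS and forwards HTTP to the pods
EOF

# With a logged-in oc session:
#   oc apply -f internal-ingress.yaml
# Scaling the default controller instead:
#   oc patch -n openshift-ingress-operator ingresscontroller/default \
#     --type=merge -p '{"spec":{"replicas":3}}'
```

Unless the default controller is also given a selector, it admits this Route too, so sharding usually means restricting both.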

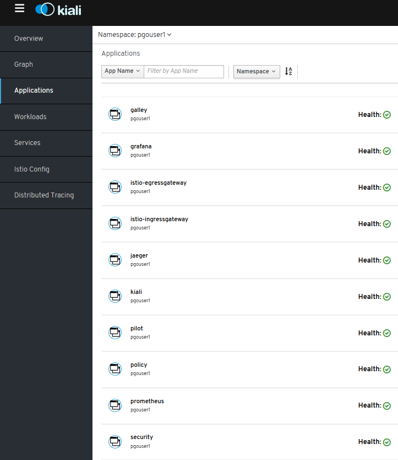

ServiceMesh Operator

- ServiceMesh: Istio + kiali + Jaeger

- ServiceMesh Community Edition: github.com/maistra/istio

- Red Hat community installer compliant with OCP 4.1: maistra.io/docs/getting_started/install

- Outcome: known errors were publicly reported in two or three components.

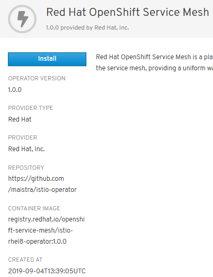

- Certified ServiceMesh Operator

- ServiceMesh GA in September 2019 (available in OperatorHub):

- Certified & Packaged by Red Hat

- “One-click” deployment

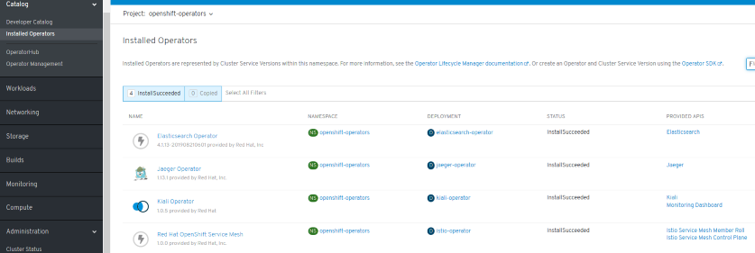

- Preparing to install Red Hat OpenShift Service Mesh. To install the Red Hat OpenShift Service Mesh Operator, you must first install these Operators:

- Elasticsearch

- Jaeger

- Kiali

- Do not install Community versions of the Operators. Community Operators are not supported.
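Once the Operators are installed and a ServiceMeshControlPlane has been created (commonly in `istio-system`), projects join the mesh through a ServiceMeshMemberRoll in the same namespace. A minimal sketch, assuming the `maistra.io/v1` API and a hypothetical project `bookinfo`:

```shell
cat > smmr.yaml <<'EOF'
apiVersion: maistra.io/v1
kind: ServiceMeshMemberRoll
metadata:
  name: default                 # the member roll must be named "default"
  namespace: istio-system       # namespace of the ServiceMeshControlPlane
spec:
  members:
  - bookinfo                    # hypothetical project whose workloads join the mesh
EOF

# With a logged-in oc session:
#   oc apply -f smmr.yaml
```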

Serverless Operator (Knative)

- Operator install on OperatorHub.io

- Knative Eventing (Camel-K, Kafka, Cron, etc)

- Integration with OpenShift ServiceMesh, Logging, Monitoring.

- openshift.com/learn/topics/serverless

- redhat-developer-demos.github.io/knative-tutorial
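A minimal Knative Serving example, assuming an OpenShift Serverless release that serves the `serving.knative.dev/v1` API and a hypothetical project `my-project`. The image is the upstream Knative "helloworld-go" sample; Knative creates the Route, revisions and scale-to-zero behaviour from this single object.

```shell
cat > knative-service.yaml <<'EOF'
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: hello
  namespace: my-project
spec:
  template:
    spec:
      containers:
      - image: gcr.io/knative-samples/helloworld-go
        env:
        - name: TARGET          # the sample app greets this value
          value: OpenShift
EOF

# With a logged-in oc session:
#   oc apply -f knative-service.yaml
#   oc get ksvc hello -n my-project    # shows the generated URL
```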

Monitoring and Observability

Grafana

- Integrated Grafana v5.4.3 (deployed by default):

- Monitoring -> Dashboards

- Project “openshift-monitoring”

- grafana.com/docs/v5.4/

Prometheus

- Integrated Prometheus v2.7.2 (deployed by default):

- Monitoring -> Metrics

- Project “openshift-monitoring”

- prometheus.io/docs/prometheus/2.7/getting_started/

Alerts and Silences

- Integrated Alertmanager 0.16.2 (deployed by default):

- Monitoring -> Alerts

- Monitoring -> Silences

- Silences temporarily mute alerts based on a set of conditions that you define. Notifications are not sent for alerts that meet the given conditions.

- Project “openshift-monitoring”

- prometheus.io/docs/alerting/alertmanager/

Cluster Logging (EFK)

- thenewstack.io: Log Management for Red Hat OpenShift

- EFK: Elasticsearch + Fluentd + Kibana

- Cluster Logging EFK not deployed by default

- As an OpenShift Container Platform cluster administrator, you can deploy cluster logging to aggregate logs for a range of OpenShift Container Platform services.

- The OpenShift Container Platform cluster logging solution requires that you install both the Cluster Logging Operator and Elasticsearch Operator. There is no use case in OpenShift Container Platform for installing the operators individually. You must install the Elasticsearch Operator using the CLI following the directions below. You can install the Cluster Logging Operator using the web console or CLI.

Deployment procedure based on CLI + web console:

- docs.openshift.com/container-platform/4.4/logging/cluster-logging-deploying.html

- Elasticsearch Operator must be installed in Project “openshift-operators-redhat”

- Cluster Logging Operator must be deployed in Project “openshift-logging”

- CatalogSourceConfig added to enable Elasticsearch Operator on the cluster

- etc.
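A sketch of the CLI step for the Elasticsearch Operator, modelled on the 4.4-era procedure linked above: a Namespace, an OperatorGroup and a Subscription in `openshift-operators-redhat`. The channel `"4.4"` is an assumption matching that documentation version; use the channel for your cluster's minor version.

```shell
cat > eo.yaml <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: openshift-operators-redhat
  annotations:
    openshift.io/node-selector: ""
  labels:
    openshift.io/cluster-monitoring: "true"
---
apiVersion: operators.coreos.com/v1
kind: OperatorGroup
metadata:
  name: openshift-operators-redhat
  namespace: openshift-operators-redhat
spec: {}                         # no targetNamespaces: the Operator watches all namespaces
---
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: elasticsearch-operator
  namespace: openshift-operators-redhat
spec:
  name: elasticsearch-operator
  channel: "4.4"                 # assumption: match your cluster's minor version
  source: redhat-operators
  sourceNamespace: openshift-marketplace
  installPlanApproval: Automatic
EOF

# With a logged-in oc session:
#   oc apply -f eo.yaml
```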

| OCP Release | Elasticsearch | Fluentd | Kibana | EFK deployed by default |
|---|---|---|---|---|
| OpenShift 3.11 | 5.6.13.6 | 0.12.43 | 5.6.13 | No |
| OpenShift 4.1 | 5.6.16 | ? | 5.6.16 | No |

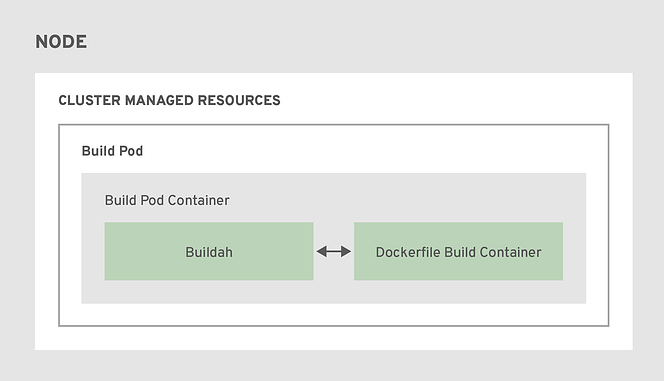

Build Images. Next-Generation Container Image Building Tools

- Redesign of how images are built on the platform.

- Instead of relying on a daemon on the host to manage containers, image creation, and image pushing, we are leveraging Buildah running inside our build pods.

- This aligns with the general OpenShift 4 theme of making everything “just another pod”

- A simplified set of build workflows, not dependent on the node host having a specific container runtime available.

- Dockerfiles that built under OpenShift 3.x will continue to build under OpenShift 4.x and S2I builds will continue to function as well.

- The actual BuildConfig API is unchanged, so a BuildConfig from a v3.x cluster can be imported into a v4.x cluster and work without modification.

- Podman & Buildah for docker users

- OpenShift ImageStreams

- OpenShift 4 image builds

- Custom image builds with Buildah

- Rootless podman and NFS
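Since the BuildConfig API is unchanged, a v3-style Docker-strategy build still works; in OpenShift 4 it runs with Buildah inside the build pod. A minimal sketch, where the Git repository `https://github.com/example/my-app.git` and the names are hypothetical:

```shell
cat > buildconfig.yaml <<'EOF'
apiVersion: image.openshift.io/v1
kind: ImageStream
metadata:
  name: my-app                   # receives the built image
---
apiVersion: build.openshift.io/v1
kind: BuildConfig
metadata:
  name: my-app
spec:
  source:
    git:
      uri: https://github.com/example/my-app.git   # hypothetical repository
  strategy:
    dockerStrategy:
      dockerfilePath: Dockerfile
  output:
    to:
      kind: ImageStreamTag
      name: my-app:latest
EOF

# With a logged-in oc session:
#   oc apply -f buildconfig.yaml
#   oc start-build my-app --follow
```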

OpenShift Registry and Quay Registry

Local Development Environment

- For version 3 there is the Container Development Kit (or its open source equivalent for OKD, Minishift), which launches a single-node VM running OpenShift in a few minutes. It is well suited for testing, including as part of a CI/CD pipeline.

- OpenShift 4 on your laptop: a working single-node OpenShift cluster is provided by a new project called CodeReady Containers.

- Procedure:

```shell
# untar the downloaded archive, then:
crc setup
crc start
# set the environment variables so that oc is on the PATH
eval $(crc oc-env)
# log in with the credentials printed by crc start
oc login
```

GitOps Catalog

- github.com/redhat-cop/gitops-catalog Tools and technologies that are hosted on an OpenShift cluster. The GitOps Catalog includes kustomize bases and overlays for a number of OpenShift operators and applications.

OpenShift on Azure

- Introducing Azure Red Hat OpenShift on OpenShift 4 🌟

- dkrallis.wordpress.com: How to create an OpenShift Cluster in Azure and how you can interact with Azure DevOps environment – Part A

- developers.redhat.com: How to easily deploy OpenShift on Azure using a GUI, Part 1

OpenShift Youtube

- OpenShift Youtube

- youtube: Installing OpenShift 4 on AWS with operatorhub.io integration 🌟

- youtube: OpenShift 4 OAuth Identity Providers

- youtube: OpenShift on Google Cloud, AWS, Azure and localhost

- youtube: Getting Started with OpenShift 4 Security 🌟

- youtube playlist: London 2020 | OpenShift Commons Gathering 🌟 OCP4 Updates & Roadmaps, Customer Stories, OpenShift Hive (case study), Operator Ecosystem.

OpenShift 4 Training

- github.com: Openshift 4 training

- learn.openshift.com

- learn.crunchydata.com

- Red Hat Shares ― Learning Kubernetes

OpenShift 4 Roadmap

- blog.openshift.com: OpenShift 4 Roadmap (slides) - this link may change

- blog.openshift.com: OpenShift Container Storage (OCS 3 & 4 slides)

- This link is now broken. Grab a copy from here

- blog.openshift.com: OpenShift 4 Roadmap Update (slides)

- This link is now broken. Grab a copy from here

Kubevirt Virtual Machine Management on Kubernetes

- kubevirt.io 🌟

- Getting Started with KubeVirt Containers and Virtual Machines Together

- containerjournal.com: Red Hat Integrates KubeVirt With Kubernetes Management Platform From SAP

- kubermatic.com: Bringing Your VMs to Kubernetes With KubeVirt

- medium.com/adessoturkey: Create a Windows VM in Kubernetes using KubeVirt Windows VM in a Kubernetes Cluster. In this tutorial, you will learn how to run a Windows VM inside a KinD Cluster that is running on an Ubuntu machine

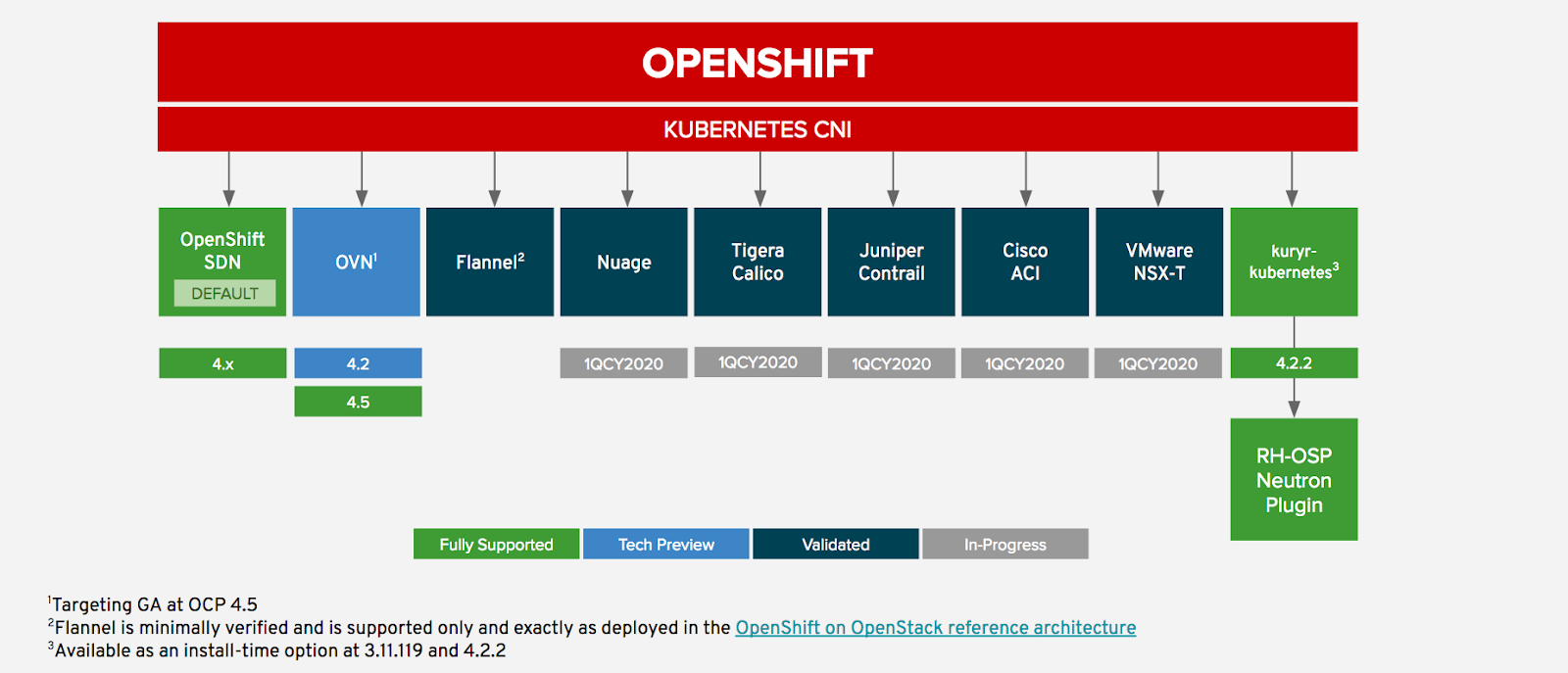

Networking and Network Policy in OCP4. SDN/CNI plug-ins

- Networking in OCP4

- Currently, the default OpenShift CNI is OpenShift SDN (network-policy), which configures an overlay network using Open vSwitch (OVS 2.11). The following diagram shows the CNI options for OpenShift and the status of each (supported, validated, etc. …):

- redhat.com: Network traffic control for containers in Red Hat OpenShift

Multiple Networks with SDN/CNI plug-ins. Usage scenarios for an additional network

- Understanding multiple networks In Kubernetes, container networking is delegated to networking plug-ins that implement the Container Network Interface (CNI). OpenShift Container Platform uses the Multus CNI plug-in to allow chaining of CNI plug-ins. During cluster installation, you configure your default Pod network. The default network handles all ordinary network traffic for the cluster. You can define an additional network based on the available CNI plug-ins and attach one or more of these networks to your Pods. You can define more than one additional network for your cluster, depending on your needs. This gives you flexibility when you configure Pods that deliver network functionality, such as switching or routing.

- You can use an additional network in situations where network isolation is needed, including data plane and control plane separation. Isolating network traffic is useful for the following performance and security reasons:

- Performance: You can send traffic on two different planes in order to manage how much traffic flows along each plane.

- Security: You can send sensitive traffic onto a network plane that is managed specifically for security considerations, and you can separate private data that must not be shared between tenants or customers.

- All of the Pods in the cluster still use the cluster-wide default network to maintain connectivity across the cluster. Every Pod has an eth0 interface that is attached to the cluster-wide Pod network. You can view the interfaces for a Pod by using the `oc exec -it <pod_name> -- ip a` command. If you add additional network interfaces that use Multus CNI, they are named net1, net2, …, netN.

- To attach additional network interfaces to a Pod, you must create configurations that define how the interfaces are attached. You specify each interface by using a Custom Resource (CR) that has a NetworkAttachmentDefinition type. A CNI configuration inside each of these CRs defines how that interface is created.

- openshift.com: Demystifying Multus 🌟
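The attachment workflow described above (define a NetworkAttachmentDefinition, reference it from a Pod, get extra `netN` interfaces) can be sketched in Python as plain manifest dictionaries. This assumes the `k8s.cni.cncf.io/v1` API and the `k8s.v1.cni.cncf.io/networks` Pod annotation read by Multus. The choice of the `macvlan` plug-in with `host-local` IPAM, the host interface `ens5`, the subnet, and the network and project names are all hypothetical examples.

```python
import json


def macvlan_nad(name, namespace, master, subnet):
    """Build a NetworkAttachmentDefinition whose CNI config creates a macvlan
    interface on the host interface `master` (an assumed example setup)."""
    cni_config = {
        "cniVersion": "0.3.1",
        "name": name,
        "type": "macvlan",      # CNI plug-in that creates the extra interface
        "master": master,       # host interface the macvlan is attached to
        "mode": "bridge",
        "ipam": {"type": "host-local", "subnet": subnet},
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name, "namespace": namespace},
        # Multus expects the CNI configuration as a JSON string.
        "spec": {"config": json.dumps(cni_config)},
    }


def attach_networks(pod, network_names):
    """Ask Multus to attach additional networks to a Pod via its annotation."""
    annotations = pod.setdefault("metadata", {}).setdefault("annotations", {})
    annotations["k8s.v1.cni.cncf.io/networks"] = ",".join(network_names)
    return pod


def expected_interfaces(network_names):
    """eth0 is the default Pod network; additional networks become net1..netN."""
    return ["eth0"] + [f"net{i}" for i in range(1, len(network_names) + 1)]


if __name__ == "__main__":
    nad = macvlan_nad("storage-net", "my-project", "ens5", "192.168.10.0/24")
    pod = attach_networks({"apiVersion": "v1", "kind": "Pod",
                           "metadata": {"name": "app"}}, ["storage-net"])
    print(json.dumps(nad, indent=2))
    print(expected_interfaces(["storage-net"]))
```

Keeping the CNI config as a serialized string mirrors how the CR stores it; the cluster-wide default network is untouched, so `eth0` is always present alongside the extra interfaces.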

Istio CNI plug-in

- Istio CNI plug-in 🌟 Red Hat OpenShift Service Mesh includes a CNI plug-in, which provides an alternate way to configure application pod networking. The CNI plug-in replaces the init-container network configuration, eliminating the need to grant service accounts and projects access to Security Context Constraints (SCCs) with elevated privileges.

Calico CNI Plug-in

- Operator-based Calico CNI Plug-In is Supported on OpenShift 4 🌟

- docs.projectcalico.org: Install an OpenShift 4 cluster with Calico

Third Party Network Operators with OpenShift

Ingress Controllers in OpenShift using IPI

Storage in OCP 4. OpenShift Container Storage (OCS)

Red Hat Advanced Cluster Management for Kubernetes

- Red Hat Advanced Cluster Management for Kubernetes 🌟

- containerjournal.com: Red Hat Simplifies Kubernetes Cluster Management

OpenShift Kubernetes Engine (OKE)

Red Hat CodeReady Containers. OpenShift 4 on your laptop

- Homepage

- developers.redhat.com: Developing applications on Kubernetes 🌟

- crc-org/crc: Getting Started Guide 🌟

- Red Hat OpenShift 4.2 on your laptop: Introducing Red Hat CodeReady Containers

- dzone: Code Ready Containers - Decision Management Developer Tools Update

- Overview: running crc on a remote server

- dzone: Code Ready Containers: Installing Process Automation Learn how to make better use of Red Hat’s Code Ready Containers platform by installing process automation from a catalog.

- developers.redhat.com: How to install CodeReady Workspaces in a restricted OpenShift 4 environment

- Install Red Hat OpenShift Operators on your laptop using Red Hat CodeReady Containers and Red Hat Marketplace

- schabell.org: How to setup OpenShift Container Platform 4.5 on your local machine in minutes

- dzone: CodeReady Containers - Getting Started with OpenShift 4.5 and Process Automation Tooling What can you do with the fully stocked container registry provided to you? There is no better way to learn about container technologies, cloud native methods…

OpenShift Hive: Cluster-as-a-Service. Easily provision new PaaS environments for developers

- blog.openshift.com: openshift hive cluster as a service

- github.com/openshift/hive API-driven OpenShift 4 cluster provisioning and management. Hive is an operator which runs as a service on top of Kubernetes/OpenShift. The Hive service can be used to provision and perform initial configuration of OpenShift clusters. OpenShift Hive is an operator which enables operations teams to easily provision new PaaS environments for developers, improving productivity and reducing the process burden due to internal IT regulations.

- youtube: how to deliver OpenShift as a service (just like Red Hat)
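As a rough illustration of the cluster-as-a-service idea, the following sketch generates Hive custom resources in Python, assuming Hive's `hive.openshift.io/v1` `ClusterImageSet` and `ClusterDeployment` kinds on AWS. The Secret naming convention (`<cluster>-aws-creds`, `<cluster>-install-config`, `<cluster>-pull-secret`), the `dev-<user>` cluster names, and the example release tag are hypothetical; the referenced Secrets would have to exist before Hive can provision anything.

```python
import json

# Example release image; substitute a release you actually intend to install.
RELEASE_IMAGE = "quay.io/openshift-release-dev/ocp-release:4.14.0-x86_64"


def cluster_image_set(name, release_image):
    """ClusterImageSet: tells Hive which OpenShift release image to install."""
    return {
        "apiVersion": "hive.openshift.io/v1",
        "kind": "ClusterImageSet",
        "metadata": {"name": name},
        "spec": {"releaseImage": release_image},
    }


def cluster_deployment(cluster_name, namespace, base_domain, region, image_set):
    """ClusterDeployment for AWS. Secret names follow a hypothetical
    '<cluster>-...' convention; Hive only references them."""
    return {
        "apiVersion": "hive.openshift.io/v1",
        "kind": "ClusterDeployment",
        "metadata": {"name": cluster_name, "namespace": namespace},
        "spec": {
            "baseDomain": base_domain,
            "clusterName": cluster_name,
            "platform": {"aws": {
                "credentialsSecretRef": {"name": f"{cluster_name}-aws-creds"},
                "region": region,
            }},
            "provisioning": {
                "imageSetRef": {"name": image_set},
                "installConfigSecretRef": {"name": f"{cluster_name}-install-config"},
            },
            "pullSecretRef": {"name": f"{cluster_name}-pull-secret"},
        },
    }


def developer_clusters(developers, namespace, base_domain, region, image_set):
    """One ClusterDeployment per developer (hypothetical naming scheme)."""
    return [cluster_deployment(f"dev-{d}", namespace, base_domain, region, image_set)
            for d in developers]


if __name__ == "__main__":
    items = [cluster_image_set("openshift-v4", RELEASE_IMAGE)]
    items += developer_clusters(["alice", "bob"], "hive-dev",
                                "example.com", "us-east-1", "openshift-v4")
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Generating one declarative object per environment is what makes the "API-driven" part useful: provisioning a new developer cluster becomes a matter of creating another resource.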

OpenShift 4 Master API Protection in Public Cloud

- blog.openshift.com: Introducing Red Hat OpenShift 4.3 to Enhance Kubernetes Security 🌟 OpenShift 4.3 adds new capabilities and platforms to the installer, helping customers to embrace their company’s best security practices and gain greater access control across hybrid cloud environments. Customers can deploy OpenShift clusters to customer-managed, pre-existing VPN / VPC (Virtual Private Network / Virtual Private Cloud) and subnets on AWS, Microsoft Azure and Google Cloud Platform. They can also install OpenShift clusters with private facing load balancer endpoints, not publicly accessible from the Internet, on AWS, Azure and GCP.

- containerjournal.com: Red Hat Delivers Latest Kubernetes Enhancements

- Create an OpenShift 4.2 Private Cluster in AWS 🌟

- cloud.ibm.com: openshift-security

- docs.aporeto.com: OpenShift Master API Protection
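The private-cluster option described above is driven by the installer's `install-config.yaml`. Below is a minimal sketch, assuming the `publish: Internal` setting and the AWS `platform.aws.subnets` list of pre-existing subnet IDs. It is only a fragment: `pullSecret`, `sshKey`, `compute`, and `controlPlane` are omitted, and the cluster name, domain, subnet IDs, and CIDR are hypothetical.

```python
import json


def private_aws_install_config(name, base_domain, region, subnet_ids, machine_cidr):
    """Fragment of an install-config for a private OpenShift cluster on AWS.

    pullSecret, sshKey, compute and controlPlane sections are omitted."""
    if not subnet_ids:
        # Private clusters are installed into pre-existing VPC subnets.
        raise ValueError("a private cluster needs the IDs of existing subnets")
    return {
        "apiVersion": "v1",
        "baseDomain": base_domain,
        "metadata": {"name": name},
        "networking": {
            # Should cover the CIDRs of the pre-existing subnets.
            "machineNetwork": [{"cidr": machine_cidr}],
        },
        "platform": {"aws": {"region": region, "subnets": list(subnet_ids)}},
        # Internal: API and ingress load balancers are not exposed to the Internet.
        "publish": "Internal",
    }


if __name__ == "__main__":
    cfg = private_aws_install_config(
        "private-ocp", "example.com", "us-east-1",
        ["subnet-0aaa", "subnet-0bbb"], "10.0.0.0/16")
    # JSON is valid YAML, so this output can serve as a starting point
    # for install-config.yaml once the omitted sections are added.
    print(json.dumps(cfg, indent=2))
```

The early `ValueError` is a design choice of this sketch: it fails fast instead of letting the installer discover later that no existing network was supplied.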

Backup and Migrate to OpenShift 4

OKD4. OpenShift 4 without enterprise-level support

- OKD.io: The Community Distribution of Kubernetes that powers Red Hat OpenShift.

- docs.okd.io 🌟

- GitHub: OKD4

- youtube.com: OKD4

- OKD4 Roadmap: The Road To OKD4: Operators, FCOS and K8S 🌟

- github.com: OKD 4 Roadmap

- youtube.com: How To Install OKD4 on GCP - Vadim Rutkovsky (Red Hat)

- blog.openshift.com: Guide to Installing an OKD 4.4 Cluster on your Home Lab

- okd4-upi-lab-setup: Building an OpenShift - OKD 4.X Lab Installing OKD4.X with User Provisioned Infrastructure. Libvirt, iPXE, and FCOS

- redhat.com: How to run a Kubernetes cluster on your laptop 🌟 Want containers? Learn how to set up and run a Kubernetes container cluster on your laptop with OKD.

- openshift.com: Deploy a multi-master OKD 4.5 cluster using a single command in ~30 minutes

- dustymabe.com: OpenShift OKD on Fedora CoreOS on DigitalOcean Part 4: Recorded Demo

- medium: Guide OKD 4.5 Single Node Cluster

OpenShift Serverless with Knative

- redhat.com: What is knative?

- developers.redhat.com: Serverless Architecture

- datacenterknowledge.com: Explaining Knative, the Project to Liberate Serverless from Cloud Giants

- Announcing OpenShift Serverless 1.5.0 Tech Preview – A sneak peek of our GA

- Serverless applications made faster and simpler with OpenShift Serverless GA

Helm Charts and OpenShift 4

- The supported method of using Helm charts with OpenShift 4 is via the Helm Operator

- youtube

- blog.openshift.com: Helm and Operators on OpenShift, Part 1

- blog.openshift.com: Helm and Operators on OpenShift, Part 2

Red Hat Marketplace

- marketplace.redhat.com 🌟 A simpler way to buy and manage enterprise software, with automated deployment to any cloud.

- developers.redhat.com: Building Kubernetes applications on OpenShift with Red Hat Marketplace

Kubestone. Benchmarking Operator for K8s and OpenShift

OpenShift Cost Management

- blog.openshift.com: Tech Preview: Get visibility into your OpenShift costs across your hybrid infrastructure 🌟

- Cost Management and OpenShift - Sergio Ocón-Cárdenas (Red Hat) 🌟

Operators in OCP 4

- OLM operator lifecycle manager

- Top Kubernetes Operators

- operatorhub.io

- learn.crunchydata.com

- developers.redhat.com: Operator pattern: REST API for Kubernetes and Red Hat OpenShift 🌟

- developers.redhat.com: 5 tips for developing Kubernetes Operators with the new Operator SDK

- medium: Using Kubernetes Operators to Manage the Lifecycle of AI Applications
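For a concrete picture of how OLM installs an operator, here is a minimal sketch that builds the two objects usually involved, assuming the `operators.coreos.com/v1` `OperatorGroup` and `operators.coreos.com/v1alpha1` `Subscription` kinds, with the `redhat-operators` catalog in the `openshift-marketplace` namespace. The package name `my-operator`, the `stable` channel, and the namespace are hypothetical.

```python
import json


def operator_group(name, namespace):
    """OperatorGroup that scopes operators installed in `namespace` to it."""
    return {
        "apiVersion": "operators.coreos.com/v1",
        "kind": "OperatorGroup",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"targetNamespaces": [namespace]},
    }


def subscription(package, channel, namespace,
                 source="redhat-operators", manual_approval=False):
    """Subscription telling OLM which package and channel to install and track."""
    return {
        "apiVersion": "operators.coreos.com/v1alpha1",
        "kind": "Subscription",
        "metadata": {"name": package, "namespace": namespace},
        "spec": {
            "name": package,            # package name in the catalog
            "channel": channel,         # update channel to follow
            "source": source,           # CatalogSource providing the package
            "sourceNamespace": "openshift-marketplace",
            # Manual approval stops OLM from applying upgrades automatically.
            "installPlanApproval": "Manual" if manual_approval else "Automatic",
        },
    }


if __name__ == "__main__":
    items = [operator_group("my-operator-group", "my-operators"),
             subscription("my-operator", "stable", "my-operators")]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Choosing `Manual` approval is a common way to keep operator upgrades under change control: OLM still creates InstallPlans, but they wait for an explicit approval.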

Quay Container Registry

- Red Hat Introduces open source Project Quay container registry

- Red Hat Quay

- projectquay.io

- quay.io

- GitHub Quay (OSS)

- blog.openshift.com: Introducing Red Hat Quay

- operatorhub.io/operator/quay

- openshift.com: Keep Your Applications Secure With Automatic Rebuilds 🌟

- OpenShift Container Platform has historically addressed this challenge by using Image Streams. An image stream is an abstraction for referencing container images from within OpenShift, while the referenced images are stored in an image registry such as the OpenShift internal registry, Quay, or other external registries. Image streams are capable of defining triggers which allow your builds and deployments to be automatically invoked when a new version of an image is available in the backing image registry. This in effect enables rebuilding all images that are based on a particular base image as soon as a new version of the base image is available in the Red Hat container catalog, and therefore updates all images with the latest bug, CVE, and vulnerability fixes delivered in the latest base image. The challenge, however, is that this capability is limited to BuildConfigs in OpenShift and does not allow more complex workflows to be triggered when images are updated in the Red Hat container catalog. Furthermore, it is also limited to the scope of a single cluster and its internal OpenShift registry.

- Fortunately, though, using Red Hat Quay as a central registry in combination with OpenShift Pipelines opens up many possibilities for designing sophisticated workflows that ensure a secure software supply chain and automatically perform any set of actions whenever images are pushed, updated, or security vulnerabilities are discovered in the Red Hat container catalog.

- In this blog post, we will highlight how Red Hat Quay can be integrated with Tekton pipelines to trigger application rebuilds when images are updated in the Red Hat container catalog. At a high level, the flow will look like this:

- medium: Securing Containers with Red Hat Quay and Clair — Part I
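The rebuild flow described above boils down to a fan-out: a registry notification says "these tags changed", and every pipeline that builds on those images gets triggered. The sketch below assumes a Quay repository-push notification payload carrying `docker_url` and `updated_tags`; the `DEPENDENTS` mapping and the pipeline names are hypothetical. In a real Tekton setup this filtering would typically live behind an EventListener rather than in a standalone function.

```python
import json

# Hypothetical mapping: base image "repo:tag" -> pipelines that build on it.
DEPENDENTS = {
    "quay.io/myorg/ubi8-openjdk:latest": ["orders-service-pipeline",
                                          "billing-pipeline"],
    "quay.io/myorg/ubi8-python:3.9": ["reporting-pipeline"],
}


def pipelines_to_trigger(payload_json, dependents=DEPENDENTS):
    """Return the pipelines to rerun for a Quay push notification.

    Assumes the payload has 'docker_url' (e.g. 'quay.io/org/repo') and
    'updated_tags' (list of tag names). Each pipeline is listed once,
    in first-seen order."""
    payload = json.loads(payload_json)
    repo = payload["docker_url"]
    triggered = []
    for tag in payload.get("updated_tags", []):
        for pipeline in dependents.get(f"{repo}:{tag}", []):
            if pipeline not in triggered:
                triggered.append(pipeline)
    return triggered


if __name__ == "__main__":
    event = json.dumps({"docker_url": "quay.io/myorg/ubi8-openjdk",
                        "updated_tags": ["latest"]})
    print(pipelines_to_trigger(event))
```

Unlike image stream triggers, nothing here is tied to a single cluster or its internal registry: any consumer of the registry's notifications can decide what to rebuild.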

Application Migration Toolkit

- Red Hat Application Migration Toolkit is an assembly of open source tools that enables large-scale application migrations and modernizations. The tooling consists of multiple individual components that provide support for each phase of a migration process.

- windup upstream project for Red Hat Application Migration Toolkit

- RHAMT in GitHub Actions You can embed the Migration Toolkit for Applications (MTA, still named RHAMT at the time) in your GitHub workflows to ensure your app is JEE / Tomcat compliant (and more …)

- Migrate your Java apps to containers with Migration Toolkit for Applications 5.0

- developers.redhat.com: Spring Boot to Quarkus migrations and more in Red Hat’s migration toolkit for applications 5.1.0

Developer Sandbox

- developers.redhat.com: Operator pattern: REST API for Kubernetes and Red Hat OpenShift 🌟

- developers.redhat.com: Welcome to the Developer Sandbox for Red Hat OpenShift Get free access to the Developer Sandbox for Red Hat OpenShift and deploy your application code as a container on this self-service, cloud-hosted experience. Skip installations and deployment and jump directly into OpenShift.

OpenShift Topology View

OpenBuilt Platform for the Construction Industry

- OpenBuilt

- infoq.com: IBM, Red Hat and Cobuilder Develop OpenBuilt, a Platform for the Construction Industry

OpenShift AI

Scripts

Slides

Tweets

The GUI with @openshift that shows services, and connections between pods, is so cool. Such a great tool to help understand whats happening instead of a bazillion #CuddleKube commands! #CFD12 pic.twitter.com/3XQjScS6TM

— Nathan Bennett (@vNathanBennett) November 4, 2021

Why OpenShift? Helm Repositories - OpenShift is focused on operators, but we can also easily add any Helm repo and then install a chart using the UI console🎥👇#openshift #kubernetes #helm pic.twitter.com/uxK43h8U7l

— Piotr Mińkowski (@piotr_minkowski) September 20, 2023